Design Space Exploration

Engineers face many options when designing a product. They may have to select physical parameters, such as the size of the product's wheel or the number and position of its sensors. They may also have to choose cyber parameters, such as the architecture of a controller, the choice of algorithm, or sampling rates and clock speeds. We call all of these design parameters, and the engineer's objective is to choose a set, or sets, of these parameters that best meets the needs of the product's stakeholders. We term the number of parameter combinations the size of the design space. The DSE challenge arises when the design space is huge: how can the engineer make an informed decision about which parameters to choose?
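To make the notion of design space size concrete, the short sketch below counts the combinations for a handful of parameters. The parameter names and candidate values are hypothetical and chosen only for illustration; they are not taken from any INTO-CPS case study.

```python
from math import prod

# Hypothetical design parameters and candidate values (illustrative only).
design_parameters = {
    "wheel_radius_m": [0.02, 0.03, 0.04],
    "sensor_count": [2, 3, 4, 5],
    "controller": ["bang_bang", "pid"],
    "sample_rate_hz": [10, 50, 100],
}


def design_space_size(parameters):
    """Number of distinct designs: the product of the option counts."""
    return prod(len(values) for values in parameters.values())


size = design_space_size(design_parameters)
print(size)  # 3 * 4 * 2 * 3 = 72
```

Even this small example yields 72 designs, and each additional parameter multiplies the total, which is why the size of the design space grows so quickly.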

The term Design Space Exploration (DSE) refers to evaluating the performance that results from different combinations of parameters (designs) in order to determine which combinations are 'optimal'. The activity may be driven manually, with the engineer choosing sets of parameters based on intuition or on observations from previous simulations and then recording the performance afforded by the new parameters. In INTO-CPS, however, we advocate a tool-supported, automated approach. The tool support divides largely into two parts: search algorithm and objective evaluation.

WANT TO LEARN MORE

Take a look at the following project deliverables:

- D3.5 – Examples Compendium 2

- D5.2d – DSE in the INTO-CPS Platform

Search

Search algorithms fall into one of two categories: open-loop and closed-loop. In an open-loop algorithm, the set of parameter combinations to evaluate is determined at the start of an exploration and remains unchanged regardless of the results obtained from the simulations. Exhaustive search is an example of an open-loop algorithm, in which all combinations of parameters are evaluated. The benefit of such a search is that the best combination of parameters will be found. However, evaluating all combinations may not be realistic given limited simulation resources.
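An exhaustive, open-loop search can be sketched in a few lines. This is a minimal illustration and not the INTO-CPS implementation: the function `toy_cost` is a hypothetical stand-in for running a co-simulation and computing a single objective to minimise, and the parameter names and values are invented.

```python
from itertools import product


def exhaustive_search(parameters, evaluate):
    """Evaluate every parameter combination and return the lowest-cost design."""
    names = list(parameters)
    best_design, best_cost = None, float("inf")
    # The full list of designs is fixed up front; results never alter it.
    for values in product(*(parameters[name] for name in names)):
        design = dict(zip(names, values))
        cost = evaluate(design)
        if cost < best_cost:
            best_design, best_cost = design, cost
    return best_design, best_cost


def toy_cost(design):
    # Hypothetical stand-in for a simulation run (assumption for illustration).
    return (design["kp"] - 2.0) ** 2 + (design["sample_rate_hz"] - 50) ** 2 / 1000


parameters = {"kp": [0.5, 1.0, 2.0, 4.0], "sample_rate_hz": [10, 50, 100]}
best_design, best_cost = exhaustive_search(parameters, toy_cost)
print(best_design, best_cost)
```

Here all 12 combinations are evaluated, so the result is the true best design for this cost function, at the price of one simulation per combination.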

Closed-loop approaches acknowledge the problem of design space size and attempt to find good sets of parameters without evaluating the performance of all combinations. They do this by using the results of the simulations already performed to guide the choice of which designs to evaluate next. One example of a closed-loop algorithm is a genetic search. After an initial set of designs has been evaluated, the algorithm creates new designs by mixing the parameter values from the 'best' designs it has seen so far, and then evaluates those. This loop of determining the best designs so far (ranking) and then creating new designs based upon them continues until either no further improvement in performance is seen or the budget for running simulations has been used up. The advantage of such a closed-loop approach is that it can be used on much larger design spaces than an exhaustive search. The cost is that it is not guaranteed to return the globally optimal designs, and may instead return a 'local' optimum.
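The genetic loop described above can be sketched as follows. This is a simplified, single-objective illustration under stated assumptions, not the INTO-CPS algorithm: the population size, number of parents kept, mutation rate, `patience` stopping rule and `toy_cost` function are all hypothetical choices made for the example.

```python
import random


def genetic_search(parameters, evaluate, population_size=8, parents_kept=4,
                   budget=60, patience=3, seed=0):
    """Minimise evaluate(design); stop on exhausted budget or no improvement."""
    rng = random.Random(seed)
    names = list(parameters)
    results = {}  # design (as a tuple of values) -> cost

    def random_design():
        return {n: rng.choice(parameters[n]) for n in names}

    def crossover(a, b):
        # Mix parameter values from two parents, with an occasional mutation.
        child = {n: rng.choice((a[n], b[n])) for n in names}
        if rng.random() < 0.2:
            n = rng.choice(names)
            child[n] = rng.choice(parameters[n])
        return child

    def simulate(designs):
        # Evaluate only unseen designs, and never exceed the budget.
        for design in designs:
            key = tuple(design[n] for n in names)
            if key not in results and len(results) < budget:
                results[key] = evaluate(design)

    simulate([random_design() for _ in range(population_size)])
    best_cost, stale = min(results.values()), 0
    while len(results) < budget and stale < patience:
        # Ranking: the best designs seen so far become the parents.
        ranked = sorted(results, key=results.get)[:parents_kept]
        parents = [dict(zip(names, key)) for key in ranked]
        simulate([crossover(rng.choice(parents), rng.choice(parents))
                  for _ in range(population_size)])
        new_best = min(results.values())
        stale = 0 if new_best < best_cost else stale + 1
        best_cost = min(best_cost, new_best)

    best_key = min(results, key=results.get)
    return dict(zip(names, best_key)), results[best_key], len(results)


def toy_cost(design):
    # Hypothetical stand-in for a simulation run (assumption for illustration).
    return (design["kp"] - 2.0) ** 2 + (design["ki"] - 0.5) ** 2


parameters = {"kp": [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 4.0],
              "ki": [0.0, 0.25, 0.5, 0.75, 1.0],
              "sample_rate_hz": [10, 20, 50, 100]}
best_design, best_cost, simulations_run = genetic_search(parameters, toy_cost)
print(best_design, best_cost, simulations_run)
```

The design space here holds 140 combinations but at most 60 are simulated. The returned design is the best one seen, which may be a local rather than a global optimum.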

Objectives

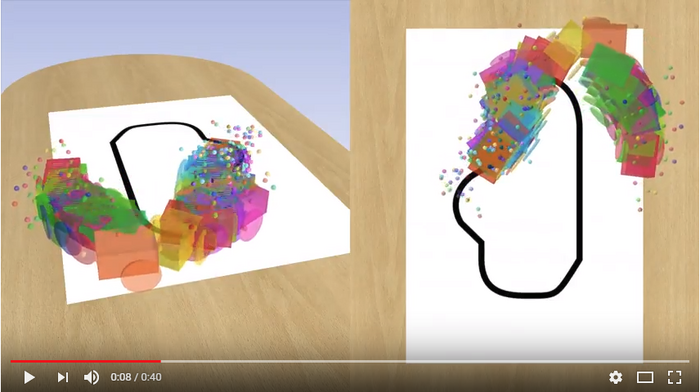

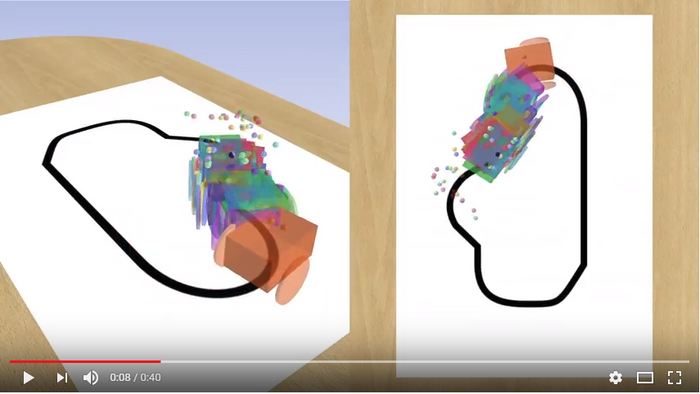

The second part of DSE is objective evaluation. The INTO-CPS tool chain includes methods for visualising the behaviour of the system under test: live view graphs that plot variable values during a simulation, a 3D animation of the system, or processing of the raw simulation results recorded by the INTO-CPS Co-simulation Orchestration Engine. Simulation results in these forms are not directly useful for the DSE process. To compare the results of two or more designs, we need values that characterise the performance of the system against some goals; we term these objective values. Examples of objective values could be the total energy consumed by a building heating system, or a measure of building user discomfort resulting from the building not being at the desired temperature. The DSE support in INTO-CPS therefore allows the user to write bespoke scripts that process the raw results of a simulation and obtain the objective values for that design. The user may also specify whether the DSE should attempt to maximise or minimise the value of each objective.
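As an illustration of what such a bespoke script might look like, the sketch below derives two objective values from raw results for the building heating example. The CSV layout, column names, sample data and setpoint are all assumptions made for this example; the actual result format and script interface are described in the project deliverables.

```python
import csv
import io

# Hypothetical raw simulation results (column names and data are assumptions).
RAW_RESULTS = """time,heater_power_w,room_temp_c,occupied
0,1000,16.0,1
600,1000,18.0,1
1200,500,20.0,1
1800,0,20.0,0
"""


def load_rows(text):
    """Parse the CSV text into a list of rows with float values."""
    reader = csv.DictReader(io.StringIO(text))
    return [{key: float(value) for key, value in row.items()} for row in reader]


def integrate(times, values):
    """Trapezoidal integration of values over time."""
    return sum((t1 - t0) * (v0 + v1) / 2
               for t0, t1, v0, v1 in zip(times, times[1:], values, values[1:]))


def energy_consumed_j(rows):
    """Total heating energy in joules: heater power integrated over time."""
    return integrate([r["time"] for r in rows],
                     [r["heater_power_w"] for r in rows])


def discomfort_degree_seconds(rows, setpoint_c=20.0):
    """Temperature deviation from the setpoint, counted only while occupied."""
    deviation = [abs(r["room_temp_c"] - setpoint_c) * r["occupied"] for r in rows]
    return integrate([r["time"] for r in rows], deviation)


rows = load_rows(RAW_RESULTS)
objectives = {
    "energy_j": energy_consumed_j(rows),
    "discomfort": discomfort_degree_seconds(rows),
}
print(objectives)
```

Each design's simulation then reduces to a small set of numbers, both to be minimised in this case, that can be compared directly with those of other designs.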

It is rare that a system will be evaluated using only a single objective value. It is much more likely that there will be multiple objectives with a tension between them, meaning that improving one will be to the detriment of another. Consider again the building example, where we measured energy consumed and user discomfort, both of which we would want to minimise. We might minimise user discomfort by maintaining the building at the required temperature at all times, but this would increase the energy cost, since the building would be heated even when it is not occupied. On the other hand, we could reduce energy costs by heating the building only when someone enters, but this would result in a long period of user discomfort while the building heats up in the morning. Thus there is a need to allow engineers to ‘trade off’ between antagonistic objectives, and in INTO-CPS we support this by using Pareto analysis as the basis for ranking. Here the user is able to describe which objectives they wish to trade off and whether each objective value is better when maximised or minimised.
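Pareto-based ranking can be sketched as below. A design dominates another if it is at least as good on every objective and strictly better on at least one; rank 1 holds the non-dominated designs, rank 2 those dominated only by rank 1, and so on. The design names and objective values are invented for illustration, and this is a minimal sketch rather than the ranking code shipped with INTO-CPS.

```python
def dominates(a, b, directions):
    """True if a is no worse than b on all objectives and better on at least one."""
    strictly_better = False
    for name, direction in directions.items():
        # Flip the sign of maximised objectives so that smaller is always better.
        sign = 1 if direction == "min" else -1
        value_a, value_b = sign * a[name], sign * b[name]
        if value_a > value_b:
            return False
        if value_a < value_b:
            strictly_better = True
    return strictly_better


def pareto_rank(results, directions):
    """Map each design name to its Pareto rank (1 = non-dominated)."""
    remaining = dict(results)
    ranks, rank = {}, 1
    while remaining:
        front = [d for d in remaining
                 if not any(dominates(remaining[o], remaining[d], directions)
                            for o in remaining if o != d)]
        for d in front:
            ranks[d] = rank
            del remaining[d]
        rank += 1
    return ranks


# Hypothetical objective values for four heating strategies (illustrative only).
results = {
    "always_on": {"energy_kwh": 120, "discomfort": 0},
    "on_entry": {"energy_kwh": 60, "discomfort": 40},
    "preheat": {"energy_kwh": 80, "discomfort": 5},
    "preheat_late": {"energy_kwh": 85, "discomfort": 10},
}
directions = {"energy_kwh": "min", "discomfort": "min"}
ranks = pareto_rank(results, directions)
print(ranks)
```

In this example three strategies share rank 1 because none beats another on both objectives, while `preheat_late` falls to rank 2 because `preheat` uses less energy and causes less discomfort. Choosing among the rank 1 designs is the trade-off left to the engineer.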

Democratisation

We have found that, even with closed-loop search, the computing time needed to perform DSE is still significant. To address this, and to democratise the DSE process for smaller organisations, we have been adding cloud support to the scripts. Using the HT-Condor system to distribute jobs, we have successfully deployed and run many simulations in parallel, significantly reducing the time needed to complete a DSE. Current efforts in this area are directed towards completing the automation of the submission and retrieval of simulations, along with strategies for handling straggler simulations that either fail to start or don’t complete in a reasonable time. These modified scripts will be available for download in the INTO-CPS application in the final quarter of 2017.